Lakehouse, mesh, fabric, and hub architectures designed for enterprise scale

What is Modern Data Architecture?

Modern data architecture is the design pattern by which an enterprise organizes its data platforms, processing engines, governance controls, and consumption interfaces to support analytics, AI applications, and real-time decision-making at scale. It moves beyond the centralized data warehouse model toward distributed, governed, and consumption-oriented designs that match how data is actually produced and used.

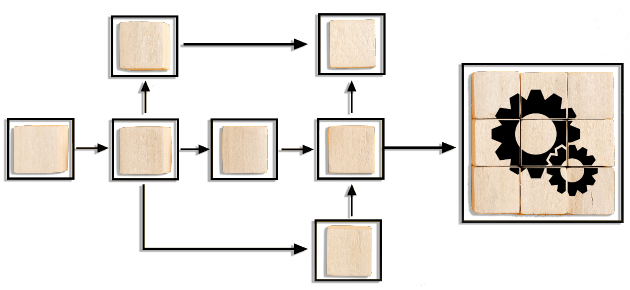

The core shift in modern data architecture is from a single monolithic platform to a federated approach where different patterns serve different needs – a lakehouse for analytics and ML, a data fabric for cross-domain integration, a data mesh for domain-driven ownership, or a hub model for shared services. Most enterprises end up running a hybrid of these patterns based on their data domains and consumer needs.

Infocepts’ modern data architecture consulting services help data leaders design and implement architecture that fits their business – not generic reference architectures pulled from vendor whitepapers. We assess your current state, model your future workloads, and design an architecture you can actually build with your team, tools, and budget.

Optimal Data Architecture

Infocepts’ modern data architecture consulting helps you design and implement a data architecture tailored to your business needs. We assess your current data ecosystem, align it with your future goals, and use automation to implement the target architecture efficiently. The aim is a data strategy that can absorb new patterns and technologies without disrupting your operations.

Our Modern Data Architecture Services

Infocepts designs and implements five modern data architecture patterns, individually or in hybrid combinations.

Data Fabric

A data fabric is a unified architecture layer that abstracts data sources, applies consistent governance, and serves multiple consumption patterns through a single interface. We design data fabric implementations using metadata-driven integration, active metadata, and AI-powered automation.
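As a rough illustration of the "single interface" idea, the sketch below registers two physically separate sources in one metadata catalog and applies the same masking rule on every read. It is a minimal sketch only: `DatasetEntry`, `FabricCatalog`, the `"***"` masking convention, and the sample rows are hypothetical names chosen for this example, not part of any product or vendor API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


@dataclass(frozen=True)
class DatasetEntry:
    """Catalog metadata for one logical dataset (all fields are illustrative)."""
    name: str
    reader: Callable[[], Iterable[Row]]        # how to fetch rows from the source
    masked_columns: frozenset = frozenset()    # governance rule: columns to hide


class FabricCatalog:
    """One access interface over several physically separate sources."""

    def __init__(self) -> None:
        self._entries: Dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        self._entries[entry.name] = entry

    def read(self, name: str) -> List[Row]:
        entry = self._entries[name]
        # The same masking rule is applied no matter where the data lives.
        return [
            {k: ("***" if k in entry.masked_columns else v) for k, v in row.items()}
            for row in entry.reader()
        ]


# Two hypothetical sources standing in for a CRM database and a file store.
crm_rows = [{"customer_id": 1, "email": "a@example.com"}]
file_rows = [{"order_id": 10, "customer_id": 1, "amount": 25.0}]

catalog = FabricCatalog()
catalog.register(DatasetEntry("customers", lambda: crm_rows, frozenset({"email"})))
catalog.register(DatasetEntry("orders", lambda: file_rows))

customers = catalog.read("customers")
orders = catalog.read("orders")
```

Consumers ask for a dataset by its logical name and never see which system served it; the data itself stays where it is.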

Data Lakehouse

A lakehouse combines the openness and scale of a data lake with the transactional reliability and performance of a data warehouse. We implement lakehouses on Databricks, Microsoft Fabric, Snowflake, and open-source platforms using Delta Lake, Iceberg, or Hudi.

Data Hubs

A data hub is a centralized integration and distribution layer for shared data domains. We design hubs that unify customer, product, supplier, or financial data across the enterprise, with strong governance and consumer-friendly access patterns.

Full Stack Data Products

A data product is a discoverable, governed, and reusable data asset designed for a specific consumer use case. We help enterprises shift from project-based data delivery to product-based data delivery, including ownership models, SLAs, and lifecycle management.
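One way to picture ownership, SLAs, and lifecycle management is as a small descriptor that travels with each data product. The sketch below is illustrative only: the `DataProduct` fields, the `Lifecycle` states, and the example product are assumptions made for this example rather than a prescribed standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Lifecycle(Enum):
    DRAFT = "draft"
    ACTIVE = "active"
    DEPRECATED = "deprecated"


@dataclass
class DataProduct:
    """Hypothetical descriptor for one data product."""
    name: str
    owner: str                  # accountable team
    consumer_use_case: str      # who the product is designed for
    freshness_sla: timedelta    # maximum tolerated age of the data
    lifecycle: Lifecycle = Lifecycle.DRAFT

    def meets_freshness_sla(self, last_refreshed: datetime, now: datetime) -> bool:
        """True when the last refresh falls within the freshness SLA."""
        return now - last_refreshed <= self.freshness_sla


churn_scores = DataProduct(
    name="customer_churn_scores",
    owner="customer-analytics-team",
    consumer_use_case="weekly retention campaigns",
    freshness_sla=timedelta(hours=24),
    lifecycle=Lifecycle.ACTIVE,
)

now = datetime(2024, 1, 2, 9, 0)
# Refreshed 21 hours before `now`: within the 24-hour SLA.
fresh = churn_scores.meets_freshness_sla(datetime(2024, 1, 1, 12, 0), now)
# Refreshed 49 hours before `now`: outside the SLA.
stale = churn_scores.meets_freshness_sla(datetime(2023, 12, 31, 8, 0), now)
```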

Data Mesh

Data mesh is a decentralized architecture where data is owned and served as products by domain teams, with a federated governance layer ensuring interoperability. We help enterprises transition from centralized to mesh patterns where domain ownership and scale demand it.
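Federated governance can be sketched as a small set of global rules that every domain-owned product must pass, while each domain stays free to design the rest. The example below assumes three hypothetical policies and two hypothetical domain products; real policy sets depend on the enterprise.

```python
from typing import Callable, Dict, List

Product = Dict[str, object]

# Global, enterprise-wide rules (illustrative). Domains own everything else.
GLOBAL_POLICIES: Dict[str, Callable[[Product], bool]] = {
    "has_owner": lambda p: bool(p.get("owner")),
    "has_schema": lambda p: bool(p.get("schema")),
    # Interoperability rule: products must expose the shared customer key.
    "uses_shared_customer_key": lambda p: "customer_id" in (p.get("schema") or {}),
}


def policy_violations(product: Product) -> List[str]:
    """Return the names of the global policies this product fails."""
    return [name for name, check in GLOBAL_POLICIES.items() if not check(product)]


sales_orders: Product = {
    "domain": "sales",
    "owner": "sales-data-team",
    "schema": {"order_id": "int", "customer_id": "int"},
}
marketing_leads: Product = {
    "domain": "marketing",
    "owner": "",  # no accountable owner declared
    "schema": {"lead_id": "int"},
}

sales_result = policy_violations(sales_orders)
marketing_result = policy_violations(marketing_leads)
```

Here the sales product passes every check, while the marketing product is flagged for a missing owner and a missing shared key.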

Accelerate Data Movement with Infocepts Real-Time Data Streamer

Infocepts’ Real-Time Data Streamer (RTDS) accelerates your modern data architecture build by speeding up data integration. It breaks through traditional data bottlenecks, aligns with modern architectures, and enables swift data access through CDC-, query-, and API-based ingestion. RTDS connects source and analytics platforms in real time, improving responsiveness and agility.
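For readers new to change data capture (CDC), the generic sketch below shows the basic idea of replaying a stream of insert, update, and delete events against a target table. It does not represent the RTDS API or its event format; the `apply_changes` function and the event fields (`op`, `key`, `row`) are assumptions made purely for illustration.

```python
from typing import Dict, List

Table = Dict[int, dict]


def apply_changes(target: Table, events: List[dict]) -> Table:
    """Apply CDC-style events to a keyed target table, in order."""
    for event in events:
        op, key = event["op"], event["key"]
        if op in ("insert", "update"):
            target[key] = event["row"]   # upsert the latest row image
        elif op == "delete":
            target.pop(key, None)        # tolerate deletes of unknown keys
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return target


# Hypothetical target state and change events captured from a source system.
target: Table = {1: {"name": "Ada"}, 2: {"name": "Bob"}}
events = [
    {"op": "update", "key": 1, "row": {"name": "Ada L."}},
    {"op": "delete", "key": 2},
    {"op": "insert", "key": 3, "row": {"name": "Cy"}},
]

result = apply_changes(target, events)
```

Because only the changes move, the target can stay close to the source without reloading full tables in a daily batch.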

Infocepts’ Real-Time Data Streamer has become a central part of our strategy for collecting data within the organization. It has changed our systems approach from a “once-a-day batch” mentality to an “all-data-in-real-time” mentality.

Senior Director of Data & Analytics

Fortune 100 Company

FAQs

What is the difference between a data fabric and a data mesh?

A data fabric is a technology-led architecture that uses metadata, automation, and integration to unify data across systems while keeping data physically distributed. A data mesh is an organizational and architectural pattern that distributes data ownership to domain teams, with shared governance. In short, fabric answers what technology connects the data; mesh answers who owns it.

What is the difference between a data lake and a data lakehouse?

A data lake stores raw data in any format but lacks the transactional reliability and query performance that analytical workloads need. A data lakehouse adds ACID transactions, schema enforcement, and query optimization on top of lake storage, giving you warehouse-like reliability with lake-like flexibility and cost.
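The toy example below illustrates two of those additions, schema enforcement and all-or-nothing commits, in plain Python. It is a sketch under stated assumptions, not how Delta Lake, Iceberg, or Hudi are implemented: `ToyTable` and its in-memory commit log are hypothetical stand-ins for a table format's transaction log.

```python
from typing import Dict, List


class ToyTable:
    """In-memory table with a declared schema and atomic batch appends."""

    def __init__(self, schema: Dict[str, type]) -> None:
        self.schema = schema
        self._commits: List[List[dict]] = []   # stand-in for a transaction log

    def append(self, batch: List[dict]) -> None:
        # Validate the whole batch first so a bad row rejects the entire write.
        for row in batch:
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match the schema")
            for column, expected in self.schema.items():
                if not isinstance(row[column], expected):
                    raise TypeError(f"{column!r} must be {expected.__name__}")
        self._commits.append(list(batch))      # commit only after validation

    def rows(self) -> List[dict]:
        return [row for batch in self._commits for row in batch]


table = ToyTable({"order_id": int, "amount": float})
table.append([{"order_id": 1, "amount": 9.5}])

try:
    # The second row has the wrong type, so neither row is committed.
    table.append([{"order_id": 2, "amount": 3.0}, {"order_id": 3, "amount": "n/a"}])
    rejected = False
except (TypeError, ValueError):
    rejected = True

committed = table.rows()
```

A plain data lake would accept both files as written; the table layer is what turns a partially bad write into a clean rejection.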

How do I choose between Snowflake, Databricks, and Microsoft Fabric?

Snowflake excels at SQL-driven analytics for business teams. Databricks is stronger for data science, ML engineering, and complex transformations. Microsoft Fabric tends to be the best fit for enterprises already standardized on the Microsoft ecosystem. Many enterprises run more than one platform for different workloads.

How long does a modern data architecture project take?

Architecture design and reference implementation: 3 to 6 months. Production rollout for a single major workload: 6 to 12 months. Enterprise-wide adoption typically runs 18 to 36 months in waves.

Can I implement modern data architecture without replacing my existing platforms?

Yes. Most enterprises layer modern architecture on top of existing platforms, using fabric or hub patterns to integrate without immediate replacement. Replacement happens gradually as workloads migrate.

What is the ROI of modern data architecture?

Mature implementations typically deliver 40 to 60 percent faster delivery of new analytics use cases, 30 to 50 percent reduction in platform TCO over 24 months, and the foundation required for enterprise AI and ML deployment.